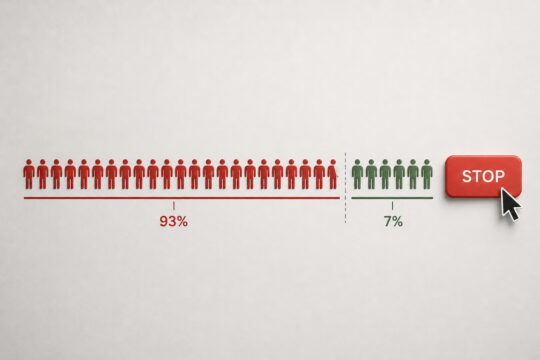

The same workforce in which 93% admitted to embellishing during the hiring process has also described, in specific terms, what a trustworthy screening process would look like. Employers trying to reduce candidate misrepresentation may already have the blueprint. It was written by the candidates gaming the system.

Key Takeaways

- Ninety-three percent of recent job seekers admitted to some form of embellishment, making misrepresentation a systemic issue rather than an individual one.

- The verification feedback loop persists because only 26% of embellishers were ever caught, making misrepresentation a low-risk calculation.

- Eighty-two percent of candidates want a clear explanation of what will be verified before they apply, which directly disrupts the assumption of minimal scrutiny.

- Human review of screening findings, requested by 81% of candidates, signals accountability in a way that fully automated processes do not.

- Consistent screening standards, requested by 75% of candidates, reduce the perception that rules can be bent and limit legal exposure under anti-discrimination frameworks.

- Transparency about AI use in screening, requested by 74% of candidates, closes an information gap that currently undermines trust on both sides.

- Independent, source-based verification of employment and education history closes the gaps that self-reported narratives leave open.

- The conditions candidates identify as trust-building align closely with what responsible, legally compliant screening programs already require.

Why the Detection Frame Misses the Point

Most organizations treat candidate misrepresentation as a detection problem. The thinking goes: better tools, faster turnarounds, more comprehensive searches will eventually close the gap. That thinking is understandable. It is also incomplete.

The Trust in Hiring Report surveyed 1,500 U.S. adults who had actively applied for at least one job in the prior 18 months. Ninety-three percent admitted to some form of embellishment or misrepresentation. Every generation. Every demographic group. Participation rates above 90% across all age cohorts. When a behavior is that widespread, the problem lives in the system, not in the people moving through it.

The more useful finding is not how many embellished. It is what they said would make them stop.

Why Detection Alone Falls Short

Among those who embellished, 53% did so because they believed employers would not verify everything. That belief held up. Only 26% reported that a discrepancy was actually found. Only 28% lost an opportunity over a detected exaggeration. When the odds of getting caught are that low, embellishment stops feeling like a risk. It starts feeling like the rational move.

The verification feedback loop runs on low expectations. Weak verification creates the expectation of weak verification, which gives candidates even less reason to be accurate. The loop feeds itself, and detection-focused fixes address the output without touching what drives it.

What the Data Actually Shows

Eighty-eight percent of respondents, in a sample where 93% admitted to embellishing, said candidate misrepresentation puts businesses at risk. These are not people who are indifferent to the problem. They are people caught between what the system rewards and what they know to be right. That tension matters when designing a response.

| Finding | Rate |
| --- | --- |
| Admitted to some form of embellishment | 93% |
| Acknowledged misrepresentation puts businesses at risk | 88% |
| Embellished because employers would not verify everything | 53% |
| Were actually caught | 26% |

The gap between 93% and 26% is where the verification feedback loop lives.
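As a rough illustration of that gap, the report's percentages can be converted into approximate headcounts. This is a sketch under two assumptions that are not confirmed by the report: that the rates apply to the unweighted sample of 1,500, and that the 26% figure is a share of those who embellished. The results are approximations, not published figures.

```python
# Back-of-the-envelope arithmetic on the report's headline figures.
# Assumption: rates apply to the unweighted sample; the report's own
# methodology may weight responses differently.
SAMPLE_SIZE = 1500        # respondents, per the report
EMBELLISHED_RATE = 0.93   # admitted to some form of embellishment
CAUGHT_RATE = 0.26        # share of embellishers who reported a discrepancy was found

embellishers = round(SAMPLE_SIZE * EMBELLISHED_RATE)
caught = round(embellishers * CAUGHT_RATE)
uncaught = embellishers - caught

print(f"Embellishers:         {embellishers}")   # 1395
print(f"Caught:               {caught}")         # 363
print(f"Embellished, uncaught: {uncaught}")      # 1032
```

On these assumptions, roughly two in three respondents embellished and reported no detected discrepancy, which is the population the verification feedback loop depends on.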

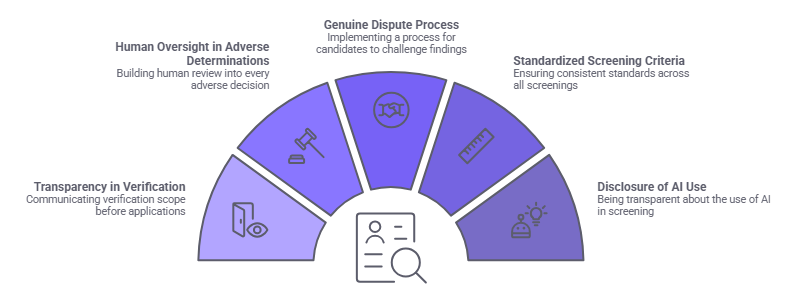

The Five Conditions That Break the Loop

The candidates in the Trust in Hiring Report did not just describe what they did. They described what would make them less likely to do it. Five conditions came back consistently across the full sample. Together, they form a practical blueprint for how to reduce candidate misrepresentation, built from the honest feedback of the people driving the problem.

Transparency About What Will Be Verified

Eighty-two percent of candidates want a clear explanation of what is being checked before the process begins. Not in a disclosure buried at the offer stage. Before the application is even submitted.

When candidates do not know what will be verified, 56% assume the answer is minimal. That assumption is where a significant portion of embellishment starts. When employers communicate verification scope clearly and early, that assumption loses its footing and the calculation shifts.

Putting verification expectations in job postings or on careers pages is one of the simplest, lowest-cost changes available. It shapes candidate behavior before the first resume is submitted.

Human Review of Screening Findings

Eighty-one percent of candidates want a human reviewing findings rather than a fully automated pipeline making the call. This preference is about accountability, not speed.

Fully automated processes send a signal, whether intended or not: nobody is really looking at this closely. That signal gives candidates less reason to be accurate. A process where a human reviews findings communicates the opposite. It signals that the results matter and someone is accountable for them. Automation is a valuable tool for eliminating repetitive work and surfacing information efficiently, and human judgment remains essential for consequential decisions. The Fair Credit Reporting Act's adverse action procedures, EEOC individualized assessment guidance, and a growing number of state and local requirements all support integrating human review into screening decisions. Organizations should verify applicable requirements in their jurisdiction with qualified legal counsel.

The Ability to Dispute Findings

Seventy-seven percent of candidates want the ability to review or dispute findings. Under the Fair Credit Reporting Act, candidates dispute inaccurate information directly with the consumer reporting agency that prepared the report. Employers support this right by providing the required pre-adverse action notice and report copy before any adverse decision is finalized, giving candidates the opportunity to address inaccuracies before the employer proceeds. Organizations should ensure their adverse action procedures are built to support this in practice.

| Candidate Demand | Support Rate | Process Implication |
| --- | --- | --- |
| Clear explanation of what is checked | 82% | Communicate verification scope before application |
| Human review of findings | 81% | Build human determination into every adverse decision |
| Ability to dispute findings | 77% | Support the candidate's right to initiate a dispute before proceeding |
| Consistent standards for all candidates | 75% | Standardize criteria and documentation across all roles |
| Transparency about AI use | 74% | Disclose where and how AI is applied in the process |

Building dispute access into the process also reduces the stress candidates carry when they know their record may contain errors they were never given a chance to correct.

Consistent Standards Across All Candidates

Seventy-five percent of candidates want the same screening standards applied to everyone applying for the same role. This matters on two levels.

Legally, inconsistent standards create exposure. In the context of criminal history, EEOC enforcement guidance requires individualized assessment rather than blanket rules. Inconsistency in other areas may implicate Title VII or applicable state and local fair hiring laws. Organizations should consult qualified legal counsel and monitor current EEOC guidance, as standards continue to evolve. Behaviorally, inconsistency sends the wrong message. When candidates sense the process is applied unevenly, they reasonably conclude that the rules are flexible, and that conclusion makes embellishment feel lower-risk.

Standardizing screening criteria and adverse action documentation across all candidates for a given role reduces both the legal exposure and the behavioral signal that inconsistency creates.
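One way an organization might audit for this is to compare the set of checks actually run on each candidate for the same role. The sketch below is illustrative only: the record layout, role names, and check names are hypothetical, and a real audit would draw on whatever the organization's screening system records.

```python
from collections import defaultdict


def find_inconsistent_roles(screenings):
    """Return roles where candidates received different sets of checks.

    `screenings` is an iterable of (role, candidate_id, checks) tuples.
    This layout is a hypothetical stand-in for real screening records.
    """
    variants_by_role = defaultdict(set)
    for role, _candidate_id, checks in screenings:
        # frozenset ignores ordering, so only the content of the check set matters
        variants_by_role[role].add(frozenset(checks))
    return sorted(role for role, variants in variants_by_role.items() if len(variants) > 1)


# Hypothetical sample data.
records = [
    ("analyst", "c1", ["employment", "education"]),
    ("analyst", "c2", ["education", "employment"]),
    ("driver", "c3", ["employment", "driving_record"]),
    ("driver", "c4", ["employment"]),
]

print(find_inconsistent_roles(records))  # ['driver']
```

A flagged role is a prompt for review, not proof of a problem. Some differences may be legitimate, and legal counsel should weigh in on which ones are.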

Transparency About AI in the Screening Process

Seventy-four percent of candidates want to know whether AI is being used in their screening process and how. As AI tools expand into resume review, interview scoring, and candidate evaluation, the lack of visibility becomes its own problem. Candidates cannot respond to criteria they do not know exist.

Several jurisdictions have already enacted specific requirements governing automated decision-making tools in employment, and additional frameworks continue to develop. Organizations should verify current requirements in their operating jurisdictions with qualified legal counsel. Communicating clearly where AI is applied and how human review interacts with those outputs closes a gap that is quietly eroding trust on both sides.

What Independent Verification Actually Closes

The five conditions above address the behavioral and perceptual side of the problem. Independent, source-based verification addresses the structural side. Both matter, and they work on different parts of the same issue.

The Limits of Self-Reported Information

Nearly everything in the hiring process starts with what the candidate provides. Resumes, cover letters, interview stories, references. The employer evaluates what is in front of them. In most cases, the substance of what the candidate presented is never independently confirmed.

The most common embellishment behaviors in the Trust in Hiring Report were exaggerating skill expertise, reported by 61%, and inflating previous role scope, reported by 59%. Both are verifiable through direct contact with prior employers or credentialing institutions, but only when that verification actually happens. Reference manipulation adds another layer. Forty-five percent of candidates coached their references on what to say. Forty-one percent had a friend or family member pose as a professional reference. A reference check that does not verify the identity and relationship of the reference is not much of a check.

Source-Based Verification as a Structural Response

Validating employment history and education credentials directly from the source closes the gaps that self-reported information leaves open. The real question is whether verification is actually happening at that level, or whether the process is accepting representations the candidate has already had the opportunity to shape.

Direct employer verification, institution-confirmed credentials, and reference checks that confirm both identity and relationship produce a different quality of information than a process that takes the candidate's word for any of it.

Speed is worthless if the data is inaccurate. A fast process that does not actually verify anything is not a screening process. It is paperwork.

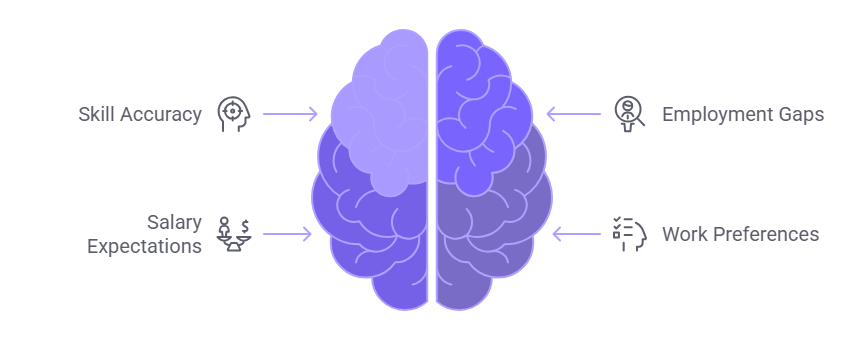

The Honesty Tax and Its Operational Consequences

The Trust in Hiring Report describes a pattern it calls the Honesty Tax. Candidates who present their qualifications accurately are more likely to be filtered out early. Candidates with embellished or AI-enhanced profiles are more likely to advance. Sixty percent of candidates said they would not have been hired if they had been fully honest.

How the Honesty Tax Shows Up in Practice

The Honesty Tax shows up at multiple points in the process, not just one.

- Skills described accurately get filtered against job requirements written for inflated claims.

- Honest employment gaps get penalized against constructed or adjusted timelines.

- Realistic salary expectations reduce leverage against candidates who have overstated prior compensation.

- Straightforward descriptions of work preferences get read as lack of fit against candidates who gave optimized answers.

At each of these points, the system penalizes accuracy and rewards optimization. Candidates pay the cost upfront. Organizations pay it later, in the form of mismatch, underperformance, and early exits.

The Organizational Cost of a Broken Signal

When hiring decisions are built on information that has been systematically optimized, the quality of that information degrades. The organization ends up evaluating candidates on their ability to present well in a process that rewards presentation over accuracy.

The downstream numbers from the Trust in Hiring Report make this concrete. Twenty-nine percent of candidates who embellished said their overstatement became apparent on the job. Twenty-five percent faced negative workplace consequences because their skills did not match their resume. Thirty-nine percent experienced post-hire stress or anxiety. These outcomes affect the candidate, but the organization's process helped create them.

Building the Process That Produces Different Outcomes

The conditions candidates identified as trust-building are not far from what well-designed, legally compliant screening programs already require. The gap is not between what candidates want and what the law expects. The gap is between what the law expects and what many organizations actually do consistently.

Aligning Process Design With Candidate-Stated Conditions

Each of the five candidate-stated conditions maps directly to responsible screening practice.

- Communicating verification scope before candidates apply addresses the 82% who want transparency and removes the assumption of minimal scrutiny that drives embellishment.

- Building human review into every adverse determination addresses the 81% who want human oversight. The Fair Credit Reporting Act requires a two-step adverse action process: a pre-adverse action notice with the consumer report and a summary of the candidate's rights, followed by a separate final adverse action notice if the employer proceeds after the waiting period. Organizations should consult qualified legal counsel to confirm their specific procedures meet current requirements.

- Implementing a genuine dispute process addresses the 77% who want to be able to challenge findings. Under the FCRA, candidates dispute inaccuracies directly with the consumer reporting agency. Employers support this right through proper pre-adverse action procedures.

- Standardizing screening criteria and documentation addresses the 75% who want consistent standards. In the context of criminal history, EEOC guidance requires individualized assessment. Standards for other screening components may vary by jurisdiction. Organizations should verify current requirements with qualified legal counsel.

- Disclosing AI use addresses the 74% who want that transparency. Several jurisdictions have enacted specific requirements on this point. Organizations should verify applicable rules in their operating locations.
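The human review and two-step adverse action sequence described in the list above can be sketched as a small state machine. This is a minimal illustration, not a compliance implementation: the class, method names, and the `WAITING_PERIOD_DAYS` value are hypothetical, and the appropriate waiting period and notice contents should be confirmed with qualified legal counsel.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from enum import Enum, auto
from typing import Optional

# Hypothetical placeholder. The article does not state a waiting-period
# length; confirm the appropriate value with qualified legal counsel.
WAITING_PERIOD_DAYS = 5


class Stage(Enum):
    FINDINGS_RECEIVED = auto()
    HUMAN_REVIEWED = auto()
    PRE_ADVERSE_SENT = auto()
    FINAL_ADVERSE_SENT = auto()


@dataclass
class AdverseActionCase:
    candidate_id: str
    stage: Stage = Stage.FINDINGS_RECEIVED
    reviewer: Optional[str] = None
    pre_adverse_sent_on: Optional[date] = None

    def record_human_review(self, reviewer: str) -> None:
        if self.stage is not Stage.FINDINGS_RECEIVED:
            raise ValueError("review must follow receipt of findings")
        self.reviewer = reviewer
        self.stage = Stage.HUMAN_REVIEWED

    def send_pre_adverse_notice(self, today: date, report_copy: bool, rights_summary: bool) -> None:
        # No notice goes out until a named human has reviewed the findings.
        if self.stage is not Stage.HUMAN_REVIEWED:
            raise ValueError("a human must review the findings first")
        if not (report_copy and rights_summary):
            raise ValueError("notice must include the report copy and rights summary")
        self.pre_adverse_sent_on = today
        self.stage = Stage.PRE_ADVERSE_SENT

    def send_final_adverse_notice(self, today: date) -> None:
        if self.stage is not Stage.PRE_ADVERSE_SENT:
            raise ValueError("pre-adverse action notice must be sent first")
        earliest = self.pre_adverse_sent_on + timedelta(days=WAITING_PERIOD_DAYS)
        if today < earliest:
            raise ValueError("waiting period has not elapsed")
        self.stage = Stage.FINAL_ADVERSE_SENT


case = AdverseActionCase("cand-001")
case.record_human_review("reviewer-a")
case.send_pre_adverse_notice(date(2026, 3, 2), report_copy=True, rights_summary=True)
case.send_final_adverse_notice(date(2026, 3, 9))
print(case.stage.name)  # FINAL_ADVERSE_SENT
```

The sketch enforces ordering only. It does not model what happens when a candidate disputes the report during the waiting period, which a real process would need to handle before any final notice is sent.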

Automation, Scale, and the Amplification Problem

Automation does not eliminate the need for human judgment. It changes where that judgment gets applied. When an automated system surfaces information, a trained reviewer still has to determine what it means, whether it is accurate, and what the right decision is. Gaps in systems or procedures do not stay small at scale. They multiply. An automated process with a structural flaw does not produce one problem. It produces that problem across every candidate that passes through it.

Human review is not an add-on. It is the layer that makes the process legally defensible and operationally sound.

Conclusion

The blueprint for reducing candidate misrepresentation came from the candidates themselves. Transparency, consistency, human oversight, a genuine dispute process. These are process decisions that align with what responsible screening already requires and what the data shows actually changes behavior.

About the 2026 Trust in Hiring Report

The 2026 Trust in Hiring Report is a proprietary research study published by GCheck, based on a national survey of 1,500 U.S. adults employed full-time who actively applied for at least one job in the past 18 months. Fielded February 14-22, 2026 via Pollfish, the study examines how Careerfishing, AI-assisted deception, identity concealment, and broken verification expectations are reshaping the employer-candidate trust gap. The report introduced the Careerfishing framework and documented that 93% of recent job seekers have engaged in at least one form of resume embellishment or misrepresentation. The full report, including methodology, demographic breakdowns, and the Compliance for Good framework for rebuilding trust in hiring, is available at gcheck.com/whitepapers/trust-in-hiring-report/.

Frequently Asked Questions

What does the verification feedback loop mean in hiring?

The verification feedback loop describes the self-reinforcing cycle in which weak employer verification creates the expectation of weak verification among candidates, which in turn incentivizes further embellishment. When candidates believe discrepancies will not be caught, misrepresentation becomes a low-risk calculation. The loop persists until the structural conditions that sustain it are changed through transparent, consistent, and independently verified screening practices.

How can employers reduce candidate misrepresentation without invasive screening?

The most effective interventions address process design rather than surveillance. Communicating what will be verified before candidates apply, validating credentials and employment history directly from the source, applying consistent standards across all candidates, and building genuine human review into the process all reduce the conditions that make embellishment rational. These steps also align with candidate-stated preferences for transparency and fairness.

Is human review of background check findings legally required?

The Fair Credit Reporting Act requires a two-step adverse action process. Before any adverse decision is finalized, employers must provide the candidate with a pre-adverse action notice, a copy of the consumer report, and a summary of their FCRA rights. A separate final adverse action notice is required if the employer proceeds. Human review is also strongly supported by EEOC individualized assessment guidance and a growing number of state and local requirements. Organizations should consult qualified legal counsel to confirm their specific procedures meet current requirements.

What are the legal risks of inconsistent screening standards?

Applying different screening criteria to different candidates for the same role can create legal exposure under federal anti-discrimination frameworks. In the context of criminal history, EEOC enforcement guidance requires individualized assessment rather than blanket disqualification rules. Inconsistency in other screening standards may implicate Title VII or applicable state and local fair hiring laws. Organizations should consult qualified legal counsel and verify current guidance, as applicable standards may evolve.

Why do candidates want transparency about AI use in screening?

Candidates cannot meaningfully engage with a process they do not understand. When AI tools are used to evaluate resumes, score interviews, or inform screening decisions without disclosure, candidates are subject to criteria they are unaware of and unable to address. Several jurisdictions have already enacted specific requirements governing automated employment decision tools. Organizations should verify applicable requirements in their operating locations with qualified legal counsel.

What is the Honesty Tax in hiring?

The Honesty Tax describes the structural disadvantage faced by candidates who present their qualifications accurately when competing against candidates who have embellished or optimized their profiles. When 60% of candidates believe full honesty would cost them the job, and when only 26% of embellishers are ever caught, the system functionally penalizes accuracy and rewards optimization. The downstream organizational costs include mismatch, underperformance, and early attrition.

How does source-based verification differ from standard background checks?

Source-based verification means validating employment history, educational credentials, and references directly with the issuing institution or prior employer, rather than relying on candidate-provided documentation or database records alone. This approach closes the gaps that self-reported narratives leave open and produces a higher quality of verified information. The difference matters because the most common embellishment behaviors, exaggerated skill expertise and inflated role scope, are precisely the claims that source-based verification is designed to surface.

What should candidates expect from a transparent screening process?

Candidates should expect to be informed of what will be verified before the process begins, to have their information reviewed by a qualified human reviewer, and to receive the required pre-adverse action notice, report copy, and summary of rights before any adverse decision is finalized. A separate final adverse action notice is required if the employer proceeds. Organizations that build these features into their process design create the conditions under which trust in screening is more likely to develop on both sides.

Pat Hartonian

Chief Compliance Officer

Pat Hartonian is the Chief Compliance Officer at GCheck, where he leads compliance strategy and ensures every background screening program meets the highest standards of accuracy, regulatory alignment, and fairness. He brings over 15 years of executive experience in the background screening industry, with deep expertise in criminal records research, employment and education verification, and FCRA compliance frameworks.

Pat holds an , a certification in Generative AI and Large Language Models from AWS, and completed the CORe program from Harvard Business School Online. He is also the author of Decoding Humans: How Fear, Happiness, and AI Shape Every Decision We Make, where he explores the intersection of ethics, decision-making, and emerging technologies.