A national survey of 1,500 U.S. workers reveals that AI use in job applications is not simply a preparation tool. It is a statistically measurable amplifier of fraud across every deception category, from resume inflation to reference manipulation to live-interview impersonation.

Key Takeaways

- Candidates who use four or more AI tactics misrepresent their resumes at a rate of 98.8%, compared to 80.6% among those who use none.

- Heavy AI users average 6.6 resume embellishment types versus 2.8 for non-users, a gap that holds across every fraud category measured.

- Among 403 respondents who used AI during a live interview, the average misrepresentation count reached 7.1, the highest of any sub-group in the study.

- More than half of live-interview AI users, 51.1%, also used an AI avatar to impersonate themselves in a virtual meeting.

- Reference manipulation rates more than doubled between non-AI users (36.6%) and heavy AI users (83.1%), undercutting the assumption that AI fraud is limited to resume polish.

- The assistance-to-impersonation spectrum is measurable: 61% of candidates used AI to make their answers sound more impressive than authentic, marking a clear inflection point between preparation and misrepresentation.

- Only 26% of candidates who embellished reported that their claims were actually verified and found discrepant, making AI-assisted fraud a rational, low-risk calculation under current screening conditions.

- Despite this behavior, 89.7% of heavy deceivers acknowledge that candidate misrepresentation creates risk for businesses, confirming that transparency and deterrence are the viable levers.

The Dose-Response Relationship Between AI Use and Hiring Fraud

The conventional concern about AI in job applications focuses on resume polish: inflated bullet points, AI-written cover letters, keyword-stuffed summaries. That framing underestimates the problem by a significant margin.

Data from the 2026 Trust in Hiring Report, a national survey of 1,500 U.S. full-time workers fielded in February 2026 via Pollfish, reveals something more structural. AI use does not simply add one new fraud vector. It predicts the intensity of fraud across every category simultaneously. The more AI tactics a candidate employs, the more they cheat, and that relationship holds across resume misrepresentation, reference manipulation, identity editing, and remote interview exploitation.

The Core Data: AI Intensity vs. Deception Across All Categories

The survey categorized respondents by AI usage intensity: no AI use (n=93), light AI use of one to three tactics (n=556), and heavy AI use of four or more tactics (n=851). The differences are not marginal.

| Behavior | No AI (n=93) | Light AI 1–3 (n=556) | Heavy AI 4+ (n=851) |
| --- | --- | --- | --- |
| Resume misrepresentation | 80.6% | 86.0% | 98.8% |
| Avg. embellishment types (of 13) | 2.8 | 3.4 | 6.6 |
| Reference manipulation | 36.6% | 46.8% | 83.1% |
| Remote interview exploitation | 54.8% | 60.6% | 89.9% |
| Identity editing | 63.4% | 70.9% | 91.8% |

Source: GCheck 2026 Trust in Hiring Report, n=1,500

Among non-users, 80.6% reported any resume misrepresentation, averaging 2.8 embellishment types across the 13 measured. Among heavy AI users, the misrepresentation rate climbed to 98.8%, with an average of 6.6 types. That is a 2.4-fold increase in the breadth of dishonesty, and it tracks AI usage intensity step by step.
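The fold increases can be recomputed directly from the table. The sketch below is illustrative only: the figures are copied from the table above, while the names `table`, `fold_change`, and `summary` are introduced here and do not come from the report.

```python
# Rates and averages copied from the table above: (No AI, Light AI, Heavy AI).
table = {
    "Resume misrepresentation (%)": (80.6, 86.0, 98.8),
    "Avg. embellishment types": (2.8, 3.4, 6.6),
    "Reference manipulation (%)": (36.6, 46.8, 83.1),
    "Remote interview exploitation (%)": (54.8, 60.6, 89.9),
    "Identity editing (%)": (63.4, 70.9, 91.8),
}


def fold_change(no_ai: float, heavy_ai: float) -> float:
    """Ratio of the heavy-AI value to the no-AI value."""
    return heavy_ai / no_ai


summary = {}
for behavior, (no_ai, light_ai, heavy_ai) in table.items():
    summary[behavior] = {
        "fold": round(fold_change(no_ai, heavy_ai), 2),
        "gap": round(heavy_ai - no_ai, 1),  # absolute difference, heavy vs. none
        "monotonic": no_ai < light_ai < heavy_ai,  # rises at every step
    }

for behavior, stats in summary.items():
    print(f"{behavior}: {stats['fold']}x, gap {stats['gap']}, rising={stats['monotonic']}")
```

The embellishment ratio comes out at about 2.36, which rounds to the 2.4-fold figure cited above, and every row rises at each step from no AI to light AI to heavy AI.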

What This Means for How Employers Frame the Problem

The dose-response structure matters because it reframes the problem entirely. AI hiring fraud is not a discrete behavior that some candidates engage in and others do not. It is a multiplier. Candidates who enter the AI deception ecosystem at any level tend to move deeper into it, and the data shows that movement is steep, consistent, and cross-categorical.

Employers who treat AI fraud as a resume-specific or technology-specific risk are measuring the wrong thing. The more accurate framing is that AI intensity is a predictive variable for the overall integrity posture of a candidate's application. A candidate using four or more AI tactics is statistically near-certain to be misrepresenting their background in multiple dimensions at once.

A Note on Data Interpretation

The findings are correlational, not causal. The survey captures self-reported behavior from a U.S. sample of active job seekers. It cannot establish whether AI use causes broader fraud, or whether candidates already inclined toward deception are more likely to adopt AI tools. Both dynamics may be operating simultaneously. What the data establishes with confidence is the association, and that association is strong enough to be operationally relevant.
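One way to gauge how strong the association is, without implying causation, is a pooled two-proportion z statistic on the resume misrepresentation rates for non-users and heavy users. This is a rough sketch rather than the report's own analysis. The respondent counts are reconstructed from rounded percentages, which is an assumption, and the function name `two_proportion_z` is introduced here for illustration.

```python
import math


def two_proportion_z(hits_a: int, n_a: int, hits_b: int, n_b: int) -> float:
    """Pooled two-proportion z statistic for group B versus group A."""
    p_a, p_b = hits_a / n_a, hits_b / n_b
    pooled = (hits_a + hits_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    return (p_b - p_a) / std_err


# Counts are reconstructed from the rounded percentages (an assumption):
# 80.6% of 93 is about 75 respondents; 98.8% of 851 is about 841.
no_ai_hits, no_ai_n = round(0.806 * 93), 93
heavy_hits, heavy_n = round(0.988 * 851), 851

z = two_proportion_z(no_ai_hits, no_ai_n, heavy_hits, heavy_n)
print(f"z = {z:.1f}")
```

Under these assumptions the statistic lands well above conventional significance thresholds. The normal approximation is coarse when one group is small and the other rate is close to 100%, and a large statistic still describes an association, not a cause.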

The Live-Interview Sub-Population

The dose-response data establishes the pattern at the population level. The live-interview sub-population makes it concrete.

Who These Candidates Are

Among the 1,500 survey respondents, 403 (26.9%) reported using AI during a live interview, meaning in real time while the interview was in progress. This group represents the most extreme expression of AI-assisted deception in the dataset. Their average resume misrepresentation count was 7.1 types, the highest of any sub-group measured in the study. For context, the overall sample average was 5.2.
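The 5.2 overall average and the 26.9% share can be cross-checked against the group sizes and group averages reported earlier. The sketch below assumes the three AI-intensity groups partition the full sample, which their sizes suggest, and the variable names are illustrative.

```python
groups = [
    # (label, respondents, average embellishment types)
    ("No AI", 93, 2.8),
    ("Light AI (1-3)", 556, 3.4),
    ("Heavy AI (4+)", 851, 6.6),
]

total_n = sum(n for _, n, _ in groups)
# Weight each group's average by its share of the sample.
weighted_avg = sum(n * avg for _, n, avg in groups) / total_n
live_share = 403 / total_n

print(f"respondents: {total_n}")
print(f"weighted average: {weighted_avg:.2f}")
print(f"live-interview share: {live_share:.1%}")
```

The weighted average agrees with the reported 5.2 after rounding, so the 7.1 figure for live-interview AI users sits above both the overall average and the heavy-AI group average of 6.6.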

The behaviors within this group compound rapidly:

- 51.1% also used an AI avatar to impersonate themselves in a virtual meeting.

- 57.1% used AI to overstate qualifications on their resume.

- 93.3% exploited remote interview conditions in at least one additional way, including reviewing off-camera notes, receiving real-time assistance from another person, or having someone else complete a technical assessment.

These are not isolated behaviors. They are co-occurring patterns within the same candidates.

The Impersonation Threshold

The AI avatar finding warrants particular attention because it represents a categorical shift in what remote hiring verification can assume. A video interview has long been treated as a reasonable proxy for identity confirmation. The data indicates that one in four survey respondents overall used an AI-generated avatar in a virtual job meeting. Among live-interview AI users specifically, the rate exceeds half.

The data suggests that remote interview protocols that predate widespread AI generation tools may not be calibrated for the verification challenges the survey documents. Employers who have not revisited these protocols may benefit from assessing whether current practices reflect the environment the data describes.

Framing This Group Accurately

The live-interview sub-population is not an outlier. They represent 26.9% of active job seekers in the sample. In practical terms, if a pool of 200 applicants for a competitive role mirrored the sample, approximately 54 of them would have used AI in real time during a live interview. The screening question is not whether this is happening. The question is whether current processes would detect it.
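The 54-in-200 estimate is a simple expected count. The sketch below reproduces it and adds a rough spread using a binomial model, which assumes that applicants behave independently and that the pool resembles the survey sample. Neither assumption is tested by the report, and the function name is hypothetical.

```python
import math


def expected_live_ai_users(pool_size: int, rate: float) -> tuple[float, float]:
    """Expected count and binomial standard deviation for an applicant pool."""
    mean = pool_size * rate
    std_dev = math.sqrt(pool_size * rate * (1 - rate))
    return mean, std_dev


mean, std_dev = expected_live_ai_users(pool_size=200, rate=0.269)
print(f"expected: {mean:.0f} candidates, give or take about {std_dev:.0f}")
```

The spread is a reminder that any single applicant pool can differ noticeably from the survey-wide rate.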

The conversation around AI in hiring is often framed as a technology issue, but from an HR perspective, it is really becoming a trust and verification issue. What I found most striking is that AI-assisted misrepresentation rarely occurs in isolation. Once candidates start misrepresenting themselves at one stage, the misrepresentation tends to spread across other stages of the recruitment process. That is why strong hiring today is no longer just about screening for qualifications. It is about building processes that can still verify authenticity in an environment where presentation has become easier to manipulate.

The Assistance-to-Impersonation Spectrum

AI-assisted job searching is not inherently fraudulent. Writing a stronger cover letter, practicing interview answers, or tailoring a resume to a job description all fall within normal candidate preparation. The survey data is explicit on this point, and any treatment of AI hiring fraud that fails to draw this line will misrepresent both the problem and the population.

The relevant question is not whether candidates use AI. Nearly all of them do. The question is where preparation ends and misrepresentation begins.

Nine AI Behaviors, Three Zones

The survey measured nine distinct AI behaviors. The eight listed below organize along a spectrum from preparation to active misrepresentation:

| Zone | Behavior | Prevalence |
| --- | --- | --- |
| Preparation | Writing a cover letter | 54% |
| Preparation | Tailoring resume to job description | 50% |
| Inflection point | Making answers more impressive than authentic | 61% |
| Misrepresentation | Generating overstated resume bullets | 43% |
| Misrepresentation | Writing untrue application answers | 42% |
| Misrepresentation | Communicating with hiring managers via AI output | 36% |
| Misrepresentation | Real-time AI answer generation in live interviews | 27% |
| Misrepresentation | Using an AI avatar to impersonate the candidate | 25% |

Source: GCheck 2026 Trust in Hiring Report, n=1,500

The spectrum framing also matters for employer policy. Blanket prohibitions on AI use in applications are unlikely to be effective and may disadvantage candidates who use AI legitimately. What screening processes need to detect is not AI use per se, but the output divergence between a candidate's AI-assisted presentation and their verified actual qualifications.

The 61% Figure Is the Diagnostic Marker

The 61% who used AI to make answers more impressive than authentic is the most operationally useful statistic in this section. It does not describe a niche behavior. It describes the majority behavior, and it represents the moment at which employer risk materializes. From this point forward, the employer is evaluating a candidate whose presentation is partially or substantially AI-authored rather than reflective of their actual capabilities.

The Amplifier Effect: AI Does Not Replace Fraud, It Scales It

The most non-obvious finding in the dataset is not that AI users misrepresent their resumes more. It is that AI use amplifies every other form of deception simultaneously, including forms that have nothing to do with AI technology.

Reference Manipulation: The Clearest Cross-Category Signal

Reference manipulation is the starkest example. Among non-AI users, 36.6% reported manipulating references in at least one way, including coaching a reference on what to say, having a friend or family member pose as a professional reference, or asking a coworker to impersonate a manager. These are low-technology behaviors requiring no AI tool. They are simply dishonest.

Among heavy AI users, the reference manipulation rate was 83.1%. That is a 2.3-fold increase that tracks AI usage intensity rather than any AI-specific mechanism for faking references. Candidates who have adopted a high-intensity AI approach to their job search are also more likely to manipulate references, edit their identities, and exploit remote interview conditions, even though those behaviors involve no AI at all.

Identity Editing: The Same Pattern

The identity editing data follows the same curve. Non-AI users reported identity editing at 63.4%, while heavy AI users reported it at 91.8%. The behaviors within identity editing include hiding caregiving responsibilities, removing cultural or identity-related details from resumes, using a gender-neutral name, and altering appearance or communication style during interviews. None of these require AI. All of them are elevated among heavy AI users.

What the Amplifier Effect Means for Single-Point Verification

Employers who address AI hiring fraud by adding one targeted check are solving for one layer of a multi-layer problem. The data suggests that a candidate engaged in AI-assisted fraud is simultaneously more likely to have fabricated references, misrepresented work history, concealed relevant background information, and exploited the remote interview format. Single-point verification addresses one attack surface while leaving four others unchecked.

The Adversarial Dynamic: Employer AI vs. Candidate AI

There is a structural tension embedded in the current hiring environment that the survey data makes visible, even though it does not directly measure it.

Two AI Systems With Opposing Objectives

Most enterprise hiring processes now incorporate AI at multiple stages. Applicant tracking systems use AI to parse and rank resumes. Screening platforms use AI to flag keyword matches, assess fit, and surface candidates for human review. Some assessment tools use AI to analyze response patterns, tone, and consistency.

Candidates using AI tools in their job search are, whether deliberately or not, optimizing their applications for exactly these systems. A candidate who uses AI to tailor a resume to a job description is, in many cases, tailoring it to the ATS parsing logic that will evaluate it first. A candidate who uses AI to make interview answers more compelling is producing answers that may score better on the pattern-recognition systems designed to assess them. The result is an adversarial dynamic in which both sides are using AI, but only one side has disclosed it, and only one side is currently being screened for it.

Neither Side Knows the Other's Playbook

Employers generally do not communicate what their AI screening tools evaluate or how they weight different signals. Candidates generally do not disclose that their applications were substantially AI-generated. The absence of transparency on both sides means that employers are often evaluating a candidate's AI system rather than the candidate, without knowing that is what they are doing. This is not a solvable problem within any single screening checkpoint. It is a systemic dynamic that requires rethinking what verification is designed to confirm.

The Verification Gap This Creates

Current employer AI systems are optimized for efficiency, while candidate AI systems are optimized for persuasiveness. Efficiency and persuasiveness are not the same as accuracy. A highly persuasive AI-generated application that passes every automated screening checkpoint may still represent a candidate whose actual qualifications diverge significantly from their presented profile. Catching that divergence requires independent verification against primary sources, not pattern-matching against the AI-optimized application itself.

What AI-Era Verification Must Address

The data defines the problem with enough precision that certain requirements for any adequate response become evident. What follows reflects general considerations; specific verification obligations vary by jurisdiction, role type, and applicable law.

Five Deception Vectors, Not One

The dose-response data establishes that AI-assisted fraud operates across five simultaneous categories. An adequate verification framework may need to account for all five, because the amplifier effect means a candidate engaged in one is statistically likely to be engaged in several others.

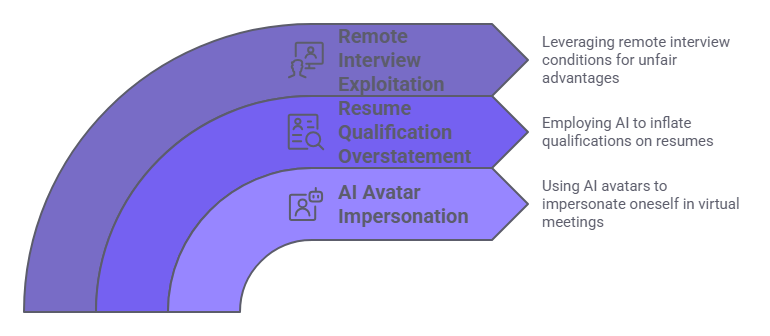

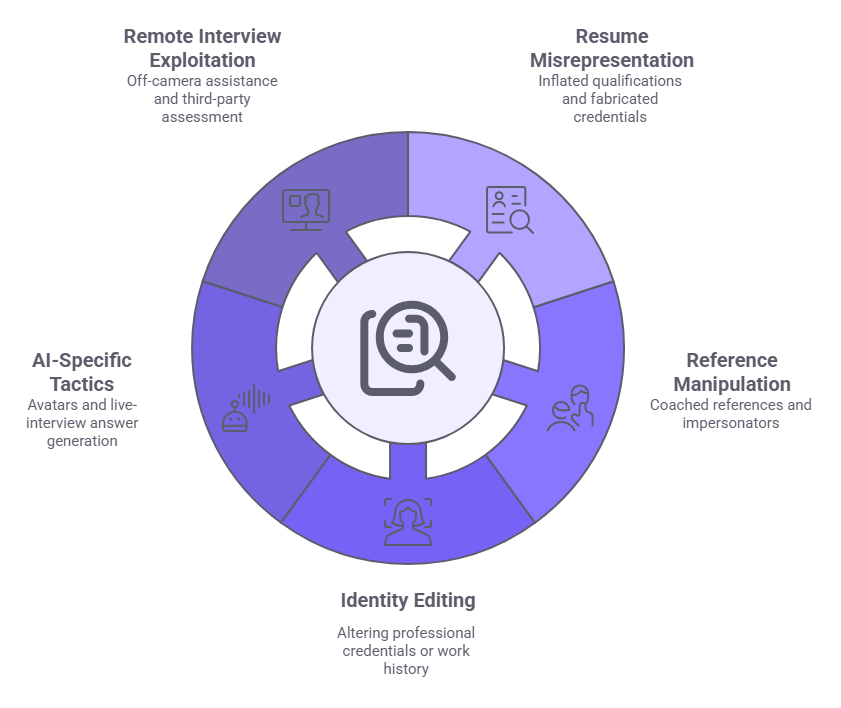

The five categories are:

- Resume misrepresentation, including inflated qualifications and fabricated credentials.

- Reference manipulation, including coached references and impersonators.

- Identity editing, to the extent it relates to verifiable professional credentials or work history, and subject to applicable anti-discrimination and privacy requirements.

- AI-specific tactics, including avatars and live-interview answer generation.

- Remote interview exploitation, including off-camera assistance and third-party assessment completion.

The failure mode of current screening is not that it misses everything. It is that it addresses one or two categories while leaving the others unexamined.
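The coverage gap can be made concrete with a small set calculation. In the sketch below, the five categories come from the list above. The check names, and the mapping of each check to the categories it covers, are hypothetical examples chosen for illustration and are not survey findings or recommendations.

```python
# The five categories come from the list above; the check names and the
# categories each one covers are hypothetical examples, not survey findings.
VECTORS = {
    "resume_misrepresentation",
    "reference_manipulation",
    "identity_editing",
    "ai_specific_tactics",
    "remote_interview_exploitation",
}

HYPOTHETICAL_CHECKS = {
    "employment_record_verification": {"resume_misrepresentation"},
    "independent_reference_verification": {"reference_manipulation"},
    "proctored_skills_assessment": {
        "remote_interview_exploitation",
        "ai_specific_tactics",
    },
}


def uncovered(active_checks: list[str]) -> set[str]:
    """Return the deception categories that no active check addresses."""
    covered: set[str] = set()
    for name in active_checks:
        covered |= HYPOTHETICAL_CHECKS[name]
    return VECTORS - covered


single = uncovered(["employment_record_verification"])
layered = uncovered(list(HYPOTHETICAL_CHECKS))
print(len(single), sorted(single))
print(len(layered), sorted(layered))
```

With one check active, four of the five categories remain unexamined. Even the layered example leaves identity editing uncovered, which fits the caution above that any verification in that category is limited by anti-discrimination and privacy requirements.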

Reference Checks and Independent Verification

Among heavy AI users, 83.1% manipulated their references in some way. This means that for the sub-population generating the highest fraud risk, the reference check as traditionally conducted is largely compromised before it begins. A coached reference, a friend posing as a former manager, or a coworker impersonating a supervisor can all pass a conversational reference call without raising obvious flags. Verification against primary sources, meaning employment records, institutional records, and documented performance data rather than candidate-supplied references, is one approach organizations may consider when assessing the reliability of candidate-supplied reference information.

The 26% Detection Rate Is the Core Incentive Problem

Only 26% of candidates who embellished reported that their claims were actually verified and found discrepant. Only 28% lost an opportunity as a result of a detected exaggeration. When the probability of detection is this low, AI-assisted fraud is not a moral failing. It is a rational response to a low-risk environment. Employers who communicate clearly what will be verified may reduce the incentive for misrepresentation before candidates apply. Verification practices should remain consistent with applicable legal requirements, including individualized assessment obligations.

The Self-Aware Deceiver and What It Means for Deterrence

The final finding worth examining closely is also the most counterintuitive. The candidates most likely to commit AI hiring fraud are also the most likely to support the mechanisms designed to catch it.

Cognitive Dissonance at Scale

Among candidates who engaged in all five categories of deception simultaneously, a group that includes a substantial portion of heavy AI users, 89.7% acknowledged that their behavior creates business risk for employers. A majority of this same group supported ongoing background screening, wanted clear explanations of what screening would cover, and preferred human review over fully automated decisions. This is not contradictory. Candidates operating in a competitive labor market respond to the incentives that market creates. If AI-assisted exaggeration improves outcomes and detection rates are low, the calculation favors exaggeration.

Deterrence Is a Viable Lever

The self-awareness data has a specific operational implication. Deterrence works on rational actors. Candidates who are making cost-benefit calculations about whether to exaggerate will update that calculation when the probability of detection increases, or when employers communicate credibly that specific claims will be verified. The survey is consistent with this. Among candidates who supported transparent screening processes, the most commonly cited trust-building factor was a clear explanation of what would be checked, cited by 82%. Employers who communicate verification standards upfront are introducing a deterrence signal at the point where it is most likely to change behavior.
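The cost-benefit reasoning can be sketched as a toy expected-payoff model. Everything here is an assumption except the 26% figure. The gain and penalty values are hypothetical and in arbitrary units, the two-outcome model is a simplification, and treating the self-reported 26% as a detection probability is itself an approximation.

```python
def expected_payoff(p_detect: float, gain: float, penalty: float) -> float:
    """Expected payoff of embellishing under a simple two-outcome model."""
    return (1 - p_detect) * gain - p_detect * penalty


def break_even_detection(gain: float, penalty: float) -> float:
    """Detection probability at which embellishing stops paying off."""
    return gain / (gain + penalty)


# Hypothetical payoffs in arbitrary units; only the 0.26 comes from the survey,
# and treating it as a detection probability is itself an assumption.
GAIN, PENALTY = 1.0, 1.5

current = expected_payoff(0.26, GAIN, PENALTY)
threshold = break_even_detection(GAIN, PENALTY)
print(f"payoff at 26% detection: {current:+.2f}")
print(f"break-even detection rate: {threshold:.0%}")
```

Under these illustrative numbers, embellishing has a positive expected payoff at a 26% detection rate and turns negative only once detection passes the break-even point. Raising the perceived probability of verification moves candidates toward that point, which is the deterrence argument made above.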

The Candidate Who Wants the System Fixed

It is worth sitting with the implication of 89.7% of heavy deceivers acknowledging business risk. This is not a population that lacks conscience. It is a population that has concluded, based on market evidence, that honesty is a competitive disadvantage. The data suggests that if employers created conditions in which honesty was not a disadvantage, a substantial proportion of candidates would not choose deception. That points toward verification systems designed to reward accurate self-representation, rather than simply punish inflated claims.

Conclusion

AI hiring fraud is no longer a qualitative concern. It is a measurable, dose-dependent phenomenon with a data structure employers can act on. The dose-response relationship between AI intensity and cross-category deception defines the problem precisely: as AI use increases, fraud expands across every verification surface simultaneously. Adequate responses require multi-point verification designed for this environment, not single-point checks designed for a simpler one.

About the 2026 Trust in Hiring Report

The 2026 Trust in Hiring Report is a proprietary research study published by GCheck, based on a national survey of 1,500 U.S. adults employed full-time who actively applied for at least one job in the past 18 months. Fielded February 14-22, 2026 via Pollfish, the study examines how Careerfishing, AI-assisted deception, identity concealment, and broken verification expectations are reshaping the employer-candidate trust gap. The report introduced the Careerfishing framework and documented that 93% of recent job seekers have engaged in at least one form of resume embellishment or misrepresentation. The full report, including methodology, demographic breakdowns, and the Compliance for Good framework for rebuilding trust in hiring, is available at gcheck.com/whitepapers/trust-in-hiring-report/.

Frequently Asked Questions

What is AI hiring fraud?

AI hiring fraud refers to the use of artificial intelligence tools to misrepresent qualifications, fabricate application materials, manipulate references, or impersonate a candidate during the hiring process. It is distinct from legitimate AI-assisted job searching in that it produces a material gap between the candidate's presented and actual qualifications. Drafting cover letters or practicing answers does not constitute fraud. Generating false credentials or impersonating a candidate in a video interview does.

How common is AI fraud in job applications?

According to the 2026 Trust in Hiring Report, 98.8% of respondents who used four or more AI tactics in their job search also misrepresented their resume in at least one way. The survey was conducted with 1,500 U.S. full-time workers via Pollfish in February 2026. AI usage intensity was the strongest single predictor of fraud intensity across every deception category measured. The relationship held across resume misrepresentation, reference manipulation, identity editing, and remote interview exploitation.

How is AI used to cheat on job applications?

The survey identified nine specific AI behaviors, ranging from cover letter writing and resume tailoring at the preparation end, to generating false application answers and deploying AI avatars in video meetings. Among respondents, 61% used AI to make their answers sound more impressive than authentic. That figure represents the clearest inflection point between legitimate preparation and active misrepresentation in the data.

Can candidates use AI to fake a job interview?

Yes. Among the 1,500 survey respondents, 403 (26.9%) reported using AI during a live interview. Of this group, 51.1% also used an AI avatar to impersonate themselves in a virtual meeting, and 93.3% exploited remote interview conditions in at least one additional way. This group averaged the highest resume misrepresentation count of any sub-group in the study, at 7.1 types.

What is an AI avatar in a job interview?

An AI avatar in a job interview is a synthetic video representation of a person, generated using artificial intelligence, used to replace or overlay the candidate's actual appearance in a video call. The 2026 Trust in Hiring Report found that 25% of all survey respondents reported using an AI-generated avatar in a virtual job meeting. That finding represents a meaningful shift in what video-based identity verification can reliably confirm.

How can employers detect AI-assisted fraud in hiring?

Detection requires multi-point verification rather than single-point checks. Because AI-assisted fraud operates across resume misrepresentation, reference manipulation, identity editing, and remote interview exploitation simultaneously, addressing any one category in isolation leaves others unexamined. Independent verification against primary sources, controlled skills assessment, and structured interview protocols are more reliable than pattern-matching against AI-optimized materials. Specific detection methodologies vary by organization, role type, and jurisdiction, and employers should verify that their processes align with applicable law.

Why do candidates use AI to cheat even when they know it creates business risk?

The survey data offers a clear explanation. Only 26% of candidates who embellished reported that their claims were actually verified and found discrepant. When detection rates are this low, exaggeration represents a rational response to a low-risk environment, not a failure of character. The same survey found that 89.7% of heavy deceivers acknowledged their behavior creates business risk. Deterrence through transparent communication of what will be verified is likely more effective than attempting to catch fraud after application.

Does AI use in job applications always indicate fraud?

No. The survey distinguishes between AI-assisted preparation, which is common and largely legitimate, and AI-powered misrepresentation, which produces a material gap between presented and actual qualifications. The inflection point in the data is using AI to make answers more impressive than authentic. Blanket prohibitions on AI use are unlikely to be effective and may disadvantage candidates who use AI tools for legitimate preparation purposes.

Additional Resources

- U.S. Equal Employment Opportunity Commission. Enforcement Guidance on the Consideration of Arrest and Conviction Records in Employment Decisions. https://www.eeoc.gov/laws/guidance/enforcement-guidance-consideration-arrest-and-conviction-records-employment-decisions

- Federal Trade Commission. Fair Credit Reporting Act (Full Text). https://www.ftc.gov/legal-library/browse/statutes/fair-credit-reporting-act

- U.S. Department of Labor. Employment Law Guide. https://webapps.dol.gov/elaws/elg/

- National Institute of Standards and Technology. AI Risk Management Framework. https://www.nist.gov/system/files/documents/2023/01/26/AI%20RMF%201.0.pdf

Charm Paz, CHRP

Recruiter & Editor

Charm Paz is an HR professional at GCheck, specializing in background screening, fair hiring, and regulatory compliance. She holds from the Professional Background Screening Association (PBSA) and helps organizations navigate employment regulations with clarity and confidence.

With a background in Industrial and Organizational Psychology, she translates policy into practice to build ethical, compliant, human-centered hiring systems that strengthen decision-making over time.