Deepfake interview fraud represents a low-frequency, high-impact risk in remote hiring, requiring HR teams to adopt observation-based detection protocols without enterprise-grade forensic tools. This guide provides practical red flags, procedural countermeasures, and compliance-safe response frameworks for talent acquisition professionals managing virtual candidate screening.

Key Takeaways

- Deepfake job interview fraud involves synthetic media manipulation where impostors use face-swapping or voice cloning to impersonate qualified candidates during video calls.

- Observable red flags include audio-visual sync issues, unnatural facial movements, inconsistent lighting across facial planes, and candidate refusal to perform spontaneous physical actions.

- Procedural countermeasures such as multi-session interviews, spontaneous verbal requests, and pre-established passphrases significantly reduce fraud risk without specialized technology.

- Technology-assisted detection tools range from liveness detection software to video forensic platforms, each carrying distinct cost, accuracy, and privacy implications.

- Legal compliance requires careful attention to biometric data laws, candidate notification obligations, and documentation standards when implementing detection protocols.

- Deepfake detection should integrate with existing identity verification and background check workflows rather than function as a standalone screening layer.

- Response protocols for suspected fraud must balance evidence preservation with wrongful accusation risks and legal consultation triggers.

- Most remote interview quality issues stem from technical limitations rather than fraud, requiring calibrated threat assessment to avoid operational paralysis.

Understanding the Deepfake Interview Threat Landscape

Deepfake technology uses artificial intelligence to create convincing synthetic audio and video content. Emerging reports from technology, finance, and remote-first organizations suggest that sophisticated impostors may leverage readily available tools to impersonate qualified candidates during video interviews. This threat differs substantially from traditional resume fraud in both execution complexity and detection difficulty.

The current threat landscape reflects low-frequency occurrence paired with significant potential impact. Unlike mass-scale fraud attempts, deepfake interview deception typically targets specific roles where remote work arrangements and competitive compensation justify the technical effort required.

| High-Risk Hiring Scenarios | Fraud Appeal Factors |
| --- | --- |
| Fully remote positions | No in-person verification checkpoint |
| Roles requiring specialized credentials | High value justifies technical effort |
| Positions with sensitive system access | Delayed post-hire verification |
| Competitive compensation packages | Strong financial incentive |

Organizations most commonly affected include those hiring for fully remote positions, roles requiring specialized credentials, or positions with access to sensitive systems and data. Risk assessment frameworks must ensure that detection protocols do not disproportionately burden candidates based on protected characteristics, work arrangement preferences, or geographic location.

Remote recruitment has unlocked tremendous potential, but it has also created risks that many HR departments are still learning to manage. Deepfake interview scams may be uncommon, yet they challenge a fundamental assumption: that the individual on the screen is who they claim to be. The goal for recruiters is not to instill fear or make the interview process more complicated, but to be mindful and intentional. Asking unplanned questions and building in multiple touchpoints can go a long way toward establishing authenticity. Trust remains a vital component of modern recruitment, but it is no longer enough on its own.

Defining Deepfake Interview Fraud

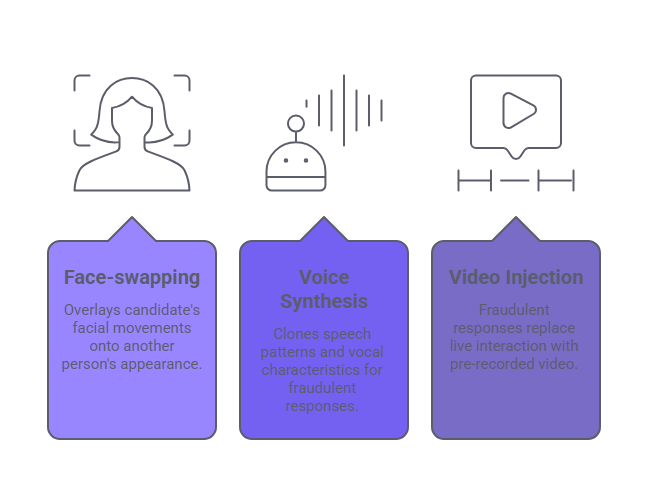

Deepfake interview fraud occurs when an individual uses synthetic media manipulation to present themselves as someone else during the hiring process. This typically involves three technical approaches:

- Face-swapping technology that overlays a candidate's facial movements onto another person's appearance

- Voice synthesis that clones speech patterns and vocal characteristics

- Pre-recorded video injection where fraudulent responses replace live interaction

The operational objective usually involves credential fraud, where an unqualified individual impersonates someone with legitimate degrees or certifications, or proxy interview schemes, where a qualified individual performs the interview on behalf of someone else who will actually assume the role.

Distinguishing Hype from Operational Risk

Media coverage of deepfake technology frequently emphasizes entertainment or political disinformation applications, creating an inflated perception of hiring-related risk. HR professionals need realistic threat calibration that acknowledges documented incidents without treating every remote interview as a likely fraud attempt. Deepfake interview fraud demands more technical sophistication and preparation than most employment fraud schemes.

Organizations should treat deepfake interview fraud detection as one component within a broader identity verification and hiring integrity framework rather than an isolated crisis requiring wholesale process redesign. Security experts observe that technology sector positions, particularly software engineering and data science roles, may present elevated targeting risk due to remote work prevalence and delayed technical skills verification.

How Deepfake Interview Fraud Works: Mechanics for HR Teams

Understanding the technical mechanisms behind deepfake manipulation helps interviewers recognize what specific indicators to monitor during candidate interactions. This knowledge translates abstract AI concepts into observable phenomena that HR professionals without technical backgrounds can identify.

Face-Swapping Technology in Interview Contexts

Face-swapping represents the most commonly discussed deepfake technique, where algorithms map one person's facial expressions and movements onto another person's facial structure. In interview applications, this allows a physically present individual to appear as the candidate whose credentials are being presented.

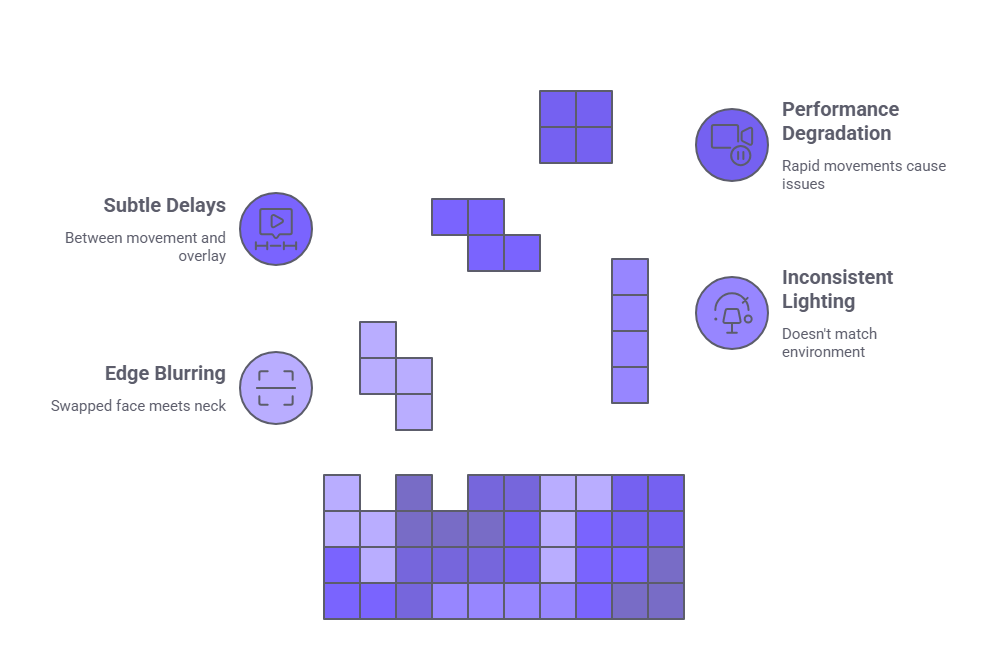

Real-time face-swapping introduces observable artifacts that trained interviewers can detect:

- Edge blurring where the swapped face meets the neck or hairline

- Inconsistent lighting that doesn't match environmental conditions

- Subtle delays between actual movement and the synthetic overlay

- Performance degradation with rapid head movements or extreme facial expressions

These systems struggle with consistent performance across extended timeframes, creating detection opportunities throughout interview duration.

Voice Synthesis and Pre-Recorded Video

Voice cloning technology can replicate speech patterns, accent characteristics, and vocal qualities after analyzing sample recordings. Audio-visual synchronization presents a persistent challenge for real-time voice synthesis. Interviewers may notice subtle delays between lip movements and corresponding sounds, or speech that lacks natural breathing patterns and micro-pauses.

Pre-recorded injection involves inserting prepared video segments into the interview stream. This technique requires anticipating likely questions and recording a matching response for each. As a result, responses may not precisely address the specific question asked, drifting away from what the interviewer actually said. Visual backgrounds may remain static or loop noticeably, and the candidate cannot respond to interruptions or spontaneous follow-up questions.

Observable Red Flags: How to Detect Deepfake Interview Indicators

Effective detection relies on systematic observation of specific indicators across visual, audio, and behavioral dimensions. HR professionals can implement these detection practices without specialized software by incorporating structured observation into standard interview protocols.

Visual and Facial Movement Anomalies

Unnatural facial movements are among the most reliable categories of deepfake indicators. Interviewers should monitor for several key indicators:

| Anomaly Type | Observable Characteristics |
| --- | --- |
| Facial expression mismatch | Smiles that don't extend to eye muscles, emotions appearing only in isolated facial areas |
| Edge artifacts | Blurring, color mismatches, or flickering where face meets hair, ears, or neck |
| Eye movement patterns | Unnaturally fixed gaze, mechanical blinking, missing micro-saccades |
| Resolution inconsistency | Some facial areas appearing sharper than others without technical justification |

Eye movement patterns offer particularly valuable detection signals. Current deepfake systems often struggle to perfectly replicate natural gaze behavior, including micro-saccades, blink timing variation, and the subtle eye movements that occur during cognitive processing, though technology capabilities continue to evolve.

Audio-Visual Synchronization and Lighting Issues

Lip-sync accuracy provides a fundamental detection checkpoint. Even sophisticated deepfake systems occasionally produce observable delays between mouth movements and corresponding audio, particularly during rapid speech or when candidates use technical terminology. Interviewers should pay special attention to plosive sounds and words with pronounced mouth shapes, where sync errors become most apparent.

Lighting provides reliable forensic indicators because replicating natural light physics across synthesized and real elements proves technically challenging. Interviewers should observe whether facial lighting direction and intensity match environmental lighting visible in the background. Shadow behavior during movement offers additional detection value, as natural shadows shift proportionally with head and body movement while synthesized overlays may produce shadows that lag or move unnaturally.

Behavioral Response Patterns

Beyond technical artifacts, behavioral patterns provide valuable detection signals. Candidates who consistently avoid direct eye contact with the camera, maintain rigidly fixed head positions, or demonstrate limited facial expressiveness may be attempting to minimize detection of synthesis artifacts.

Resistance to spontaneous requests is a critical behavioral red flag. Legitimate candidates typically comply with reasonable requests such as adjusting camera angles, moving to different lighting, or displaying identification documents to the camera. Refusal, or elaborate explanations for why such actions aren't possible, warrants closer scrutiny. Response timing also merits attention, as systematic delays before every response may indicate pre-recorded content selection or synthesis processing time.

Procedural Countermeasures and Technology-Assisted Detection

Effective fraud prevention extends beyond detection to incorporate interview protocol elements that make successful deepfake execution significantly more difficult. These approaches work alongside appropriate technology solutions for organizations with elevated risk profiles. For example, incorporating simple verification steps within the standard interview process can help minimize the need for subjective judgment. However, organizations should also make sure that technology is used in a manner that is consistent with privacy standards and is proportionate to the role.

Spontaneous Request Integration

Building spontaneous actions into interview protocols creates authentication checkpoints that pre-recorded or scripted approaches cannot easily accommodate. Interviewers should incorporate unrehearsed requests requiring immediate physical response, such as asking candidates to hold up specific numbers of fingers, tilt their head to particular angles, or spell unusual words.

The timing and variety of spontaneous requests matter as much as their inclusion. Single predictable verification moments allow fraudsters to prepare transition points between live and synthesized content. Distributed spontaneous elements throughout the interview duration increase detection probability and fraud execution difficulty.
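The idea of distributing unpredictable requests across the interview can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `REQUESTS` pool, the `schedule_requests` function, and the `buffer_min` parameter are hypothetical names, and the pool borrows its examples from the text above.

```python
import random

# Hypothetical request pool; entries are examples drawn from the guide's text.
REQUESTS = [
    "Hold up a specific number of fingers",
    "Tilt your head to a particular angle",
    "Spell an unusual word aloud",
    "Adjust your camera angle",
]

def schedule_requests(duration_min, count, rng=None, buffer_min=2):
    """Pick `count` distinct requests at random minutes spread over the interview.

    The usable time (excluding a short buffer at each end) is split into
    `count` equal windows and one random minute is drawn from each window,
    so requests are distributed rather than clustered at one predictable point.
    """
    rng = rng or random.Random()
    if count > len(REQUESTS):
        raise ValueError("not enough distinct requests in the pool")
    usable = duration_min - 2 * buffer_min
    if usable < count:
        raise ValueError("interview too short for that many requests")
    window = usable / count
    prompts = rng.sample(REQUESTS, count)  # distinct prompts, random order
    plan = []
    for i, prompt in enumerate(prompts):
        start = buffer_min + i * window
        minute = rng.uniform(start, start + window)
        plan.append((round(minute, 1), prompt))
    return plan

if __name__ == "__main__":
    for minute, prompt in schedule_requests(45, 3):
        print(f"~minute {minute}: {prompt}")
```

Because each run produces a different plan, interviewers would not repeat the same verification moment from one candidate to the next, which is the property the paragraph above describes.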

Multi-Session Architecture and Passphrase Systems

Conducting candidate evaluations across multiple sessions separated by time intervals significantly increases deepfake fraud difficulty. Each additional session requires maintaining consistent appearance, background environment, and technical setup, multiplying the opportunities for detection and increasing resource requirements for successful fraud.

Establishing unique verbal passphrases or visual signals during initial candidate contact creates authentication anchors for subsequent video interactions. These passphrases should be communicated through a separate channel: for example, the candidate confirms receipt by email and then works the phrase naturally into the video interview. Effective passphrases are unique to each candidate, use unusual word combinations, and are memorable enough to be used without reading from notes.
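One way to produce per-candidate passphrases is a small generator like the sketch below. It is a minimal example, not a recommended tool: the `WORDS` list and the `make_passphrase` function are hypothetical, and a real word list would need to be far larger. It uses Python's standard `secrets` module so the choice of words is not predictable.

```python
import secrets

# Hypothetical word list for illustration only; a real list should be much larger.
WORDS = [
    "copper", "lantern", "orbit", "velvet", "harbor", "maple",
    "quartz", "thistle", "ember", "canyon", "willow", "falcon",
]

def make_passphrase(n_words=3, issued=None, max_attempts=100):
    """Return a passphrase of distinct words not previously issued.

    `issued` is a set of phrases already given to other candidates; the new
    phrase is added to it so each candidate receives a unique one.
    """
    if issued is None:
        issued = set()
    if n_words > len(WORDS):
        raise ValueError("word list too small for requested length")
    rng = secrets.SystemRandom()  # OS-backed randomness
    for _ in range(max_attempts):
        phrase = " ".join(rng.sample(WORDS, n_words))
        if phrase not in issued:
            issued.add(phrase)
            return phrase
    raise RuntimeError("could not find an unused passphrase")

if __name__ == "__main__":
    already_issued = set()
    print(make_passphrase(issued=already_issued))
```

The phrase would then be sent through the separate channel described above, and the `issued` set kept alongside candidate records so phrases are never reused.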

Technology-Assisted Detection Options

While procedural approaches provide substantial fraud mitigation, technology solutions offer additional detection capabilities for organizations conducting high-volume remote hiring or filling sensitive positions.

| Detection Tool Category | Primary Function | Key Limitations |
| --- | --- | --- |
| Liveness detection systems | Analyze subtle characteristics like breathing patterns, eye motion, skin texture variations | Produces both false positives and false negatives, may disadvantage candidates with certain medical conditions |
| Video forensic analysis platforms | Examine compression patterns, metadata consistency, lighting physics for manipulation indicators | Requires post-interview analysis rather than real-time detection, needs specialized expertise |
| ATS-integrated verification | Automated consistency checking across multiple candidate interactions | Integration depth varies significantly, may create additional compliance obligations |

Organizations evaluating technology-assisted detection tools should prioritize accuracy metrics including both detection rates for known deepfake content and false positive rates that affect legitimate candidates. Privacy implications deserve careful consideration, as tools that collect biometric data may trigger compliance obligations under state biometric privacy laws or data protection regulations. Cost structures should account for total implementation expenses including software licensing, infrastructure requirements, and ongoing operational costs.
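To make the two accuracy metrics concrete, the sketch below computes a detection rate and a false positive rate from vendor test counts, then estimates what share of flagged candidates would actually be fraudulent at a given prevalence. All numbers are made up for illustration, and the function names are hypothetical.

```python
def detection_rate(true_positives, false_negatives):
    """Share of known deepfake samples the tool correctly flags."""
    return true_positives / (true_positives + false_negatives)

def false_positive_rate(false_positives, true_negatives):
    """Share of legitimate candidates the tool wrongly flags."""
    return false_positives / (false_positives + true_negatives)

def share_of_flags_that_are_fraud(detection, fpr, prevalence):
    """Of all candidates flagged, the fraction that are actually fraudulent.

    `prevalence` is the assumed fraction of interviews involving a deepfake.
    """
    flagged_fraud = detection * prevalence
    flagged_legit = fpr * (1 - prevalence)
    return flagged_fraud / (flagged_fraud + flagged_legit)

if __name__ == "__main__":
    # Illustrative inputs only, not vendor or industry figures.
    dr = detection_rate(true_positives=95, false_negatives=5)
    fpr = false_positive_rate(false_positives=20, true_negatives=980)
    share = share_of_flags_that_are_fraud(dr, fpr, prevalence=0.001)
    print(f"detection rate {dr:.2f}, false positive rate {fpr:.2f}")
    print(f"share of flags that are real fraud: {share:.1%}")
```

With these illustrative inputs, only about 4.5% of flags would correspond to real fraud, because legitimate candidates vastly outnumber impostors. That is why a false positive rate that looks small can still matter a great deal for a low-frequency threat.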

Legal Compliance and Integration with Background Checks

Implementing deepfake detection practices creates legal obligations and risk exposure that HR teams must address through appropriate policies, disclosures, and procedural safeguards. Understanding how this capability relates to traditional verification methods enables appropriate workflow sequencing.

Candidate Notification and Data Handling Requirements

Organizations using technology-based detection tools that collect biometric information should confirm the notification, consent, and data-handling requirements that apply in each candidate's location, because state biometric privacy laws differ significantly in scope and obligations. Many jurisdictions require clear notification before any data collection occurs. Illinois' Biometric Information Privacy Act is one such framework, requiring written consent, data retention policies, and specific destruction timelines.

Interview recordings, biometric templates, and analysis results constitute sensitive personal information requiring appropriate security measures and retention limitations. Organizations should establish specific retention periods justified by legitimate business needs, such as retention during active investigation or pending legal proceedings, with documented destruction procedures once the retention purpose concludes. When detection systems operate through third-party vendors, contractual agreements must explicitly prohibit use of candidate data for purposes beyond the contracted screening service, including algorithm training, commercial analysis, or sale to third parties.

Discrimination Risks and Permissible Use

Detection systems demonstrating disparate accuracy across demographic groups create substantial discrimination risk under federal and state employment laws. Organizations must obtain demographic bias testing results from vendors before deployment, conduct ongoing monitoring of which candidates trigger alerts, and maintain documentation demonstrating that detection protocols do not disproportionately affect protected classes.
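Ongoing monitoring of which candidates trigger alerts could start from something as simple as the sketch below, which computes alert rates per group and lists groups whose rate is well above the lowest one. The `max_ratio` threshold is a hypothetical review trigger chosen for illustration, not a legal standard, and the group labels are placeholders.

```python
from collections import Counter

def alert_rates(records):
    """Compute the alert rate per group.

    `records` is an iterable of (group_label, alerted) pairs, where `alerted`
    is True when the detection tool flagged that candidate.
    """
    totals, alerts = Counter(), Counter()
    for group, alerted in records:
        totals[group] += 1
        if alerted:
            alerts[group] += 1
    return {group: alerts[group] / totals[group] for group in totals}

def groups_to_review(rates, max_ratio=1.25):
    """List groups whose alert rate exceeds the lowest group's by `max_ratio`.

    `max_ratio` is an assumed internal review trigger, not a legal test.
    """
    baseline = min(rates.values())
    if baseline == 0:
        return sorted(g for g, r in rates.items() if r > 0)
    return sorted(g for g, r in rates.items() if r / baseline > max_ratio)

if __name__ == "__main__":
    sample = (
        [("group_a", True)] + [("group_a", False)] * 9
        + [("group_b", True)] * 3 + [("group_b", False)] * 7
    )
    rates = alert_rates(sample)
    print(rates, groups_to_review(rates))
```

A flagged group is a prompt for human review and vendor follow-up, not proof of bias. Small sample sizes in particular can produce large ratios by chance.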

Wrongful accusation of fraud carries significant legal and reputational risk. Organizations must establish clear evidentiary standards before concluding that fraud occurred. Detection activities must serve legitimate hiring-related purposes rather than unauthorized surveillance. Organizations should clearly define at what hiring stage detection protocols apply, such as limiting active technological verification to finalist candidates for sensitive positions rather than applying it universally to all applicants.

Integration with Background Check Workflows

Deepfake detection functions most effectively as one component within comprehensive identity verification and background screening processes rather than as an isolated control. Finalist-stage verification, after initial screening but before final offers, represents a common approach that balances thoroughness with efficiency.

Background checks verify credentials, employment history, criminal records, and other substantive qualifications, while deepfake detection addresses whether the person claiming those qualifications is actually the individual being interviewed. Organizations should not assume that conducting background checks eliminates deepfake risk, as fraud schemes specifically involve individuals presenting legitimate credentials belonging to real people, which background checks will verify as accurate.

Optimal workflows conduct identity verification including deepfake detection before initiating background checks. This sequencing avoids expending background check resources on individuals whose identity remains unconfirmed. Once identity confidence exists, background checks then verify the substantive qualifications that person claims.
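The sequencing described above can be expressed as a small gate: a candidate does not advance to the background check until identity is confirmed. The stage names and the `next_stage` function below are hypothetical and only sketch the ordering, not any particular ATS workflow.

```python
from enum import Enum

class Stage(Enum):
    INITIAL_SCREENING = 1
    IDENTITY_VERIFICATION = 2  # includes deepfake detection steps
    BACKGROUND_CHECK = 3
    OFFER = 4

def next_stage(current, identity_confirmed):
    """Return the stage a candidate moves to next.

    A candidate stays at IDENTITY_VERIFICATION until identity is confirmed,
    so background check resources are not spent on an unconfirmed identity.
    """
    if current is Stage.IDENTITY_VERIFICATION and not identity_confirmed:
        return Stage.IDENTITY_VERIFICATION  # hold here and escalate per policy
    order = list(Stage)  # Enum members iterate in definition order
    index = order.index(current)
    return order[min(index + 1, len(order) - 1)]

if __name__ == "__main__":
    print(next_stage(Stage.IDENTITY_VERIFICATION, identity_confirmed=False))
    print(next_stage(Stage.IDENTITY_VERIFICATION, identity_confirmed=True))
```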

Response Protocols and Avoiding Common Misconceptions

Establishing clear procedures for handling suspected fraud protects both organizational interests and candidate rights while managing legal exposure. Equally important is distinguishing between legitimate technical issues and actual fraud indicators to avoid operational paralysis.

Handling Suspected Fraud

Organizations should designate specific roles responsible for fraud investigation rather than leaving decisions to individual interviewers. HR compliance officers, legal counsel, or security personnel typically possess appropriate expertise and authority for these determinations. When interviewers observe potential indicators, initial escalation should focus on describing specific observations rather than conclusions.

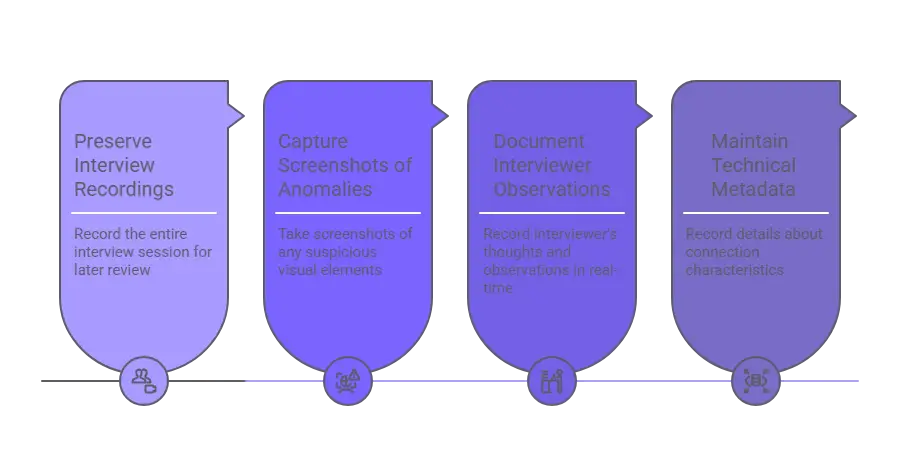

Suspected fraud situations require careful evidence documentation:

- Preserve complete interview recordings

- Capture screenshots of specific anomalies

- Document interviewer observations contemporaneously

- Maintain technical metadata about connection characteristics
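The four documentation items above map naturally onto a structured record. The sketch below is one possible shape, assuming hypothetical field names and file paths. It keeps each observation as a timestamped description of what was seen or heard, in line with the guidance to escalate observations rather than conclusions.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional

@dataclass
class Observation:
    minute: float                 # approximate point in the interview
    description: str              # what was seen or heard, not a conclusion
    screenshot_path: Optional[str] = None

@dataclass
class EscalationRecord:
    candidate_ref: str            # internal reference, hypothetical format
    interviewer: str
    recording_path: str           # location of the complete recording
    connection_metadata: dict = field(default_factory=dict)
    observations: list = field(default_factory=list)
    created_at: datetime = field(
        default_factory=lambda: datetime.now(timezone.utc)
    )

    def add(self, minute, description, screenshot_path=None):
        """Append one contemporaneous observation to the record."""
        self.observations.append(
            Observation(minute, description, screenshot_path)
        )

if __name__ == "__main__":
    record = EscalationRecord(
        candidate_ref="cand-001",
        interviewer="interviewer-a",
        recording_path="recordings/cand-001.mp4",
        connection_metadata={"platform": "video-tool", "reported_latency_ms": 120},
    )
    record.add(12.5, "Audio trailed lip movement during rapid speech",
               "screenshots/cand-001-1.png")
    print(record)
```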

Organizations recording interviews for fraud detection must provide clear notification to candidates before recording begins. Organizations must verify whether applicable state laws require single-party or all-party consent for recording, and obtain documented consent that satisfies the strictest applicable standard based on participant locations. Determining whether and how to communicate fraud suspicions to candidates requires careful judgment balancing investigation effectiveness against wrongful accusation risk.
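Applying the strictest standard across participant locations can be sketched as follows. The `consent_rules` mapping is supplied by the caller and uses placeholder jurisdiction names here. It is not a statement of any actual state's law, and real values should come from legal counsel.

```python
def required_consent(participant_locations, consent_rules):
    """Return the strictest recording-consent standard among participants.

    `consent_rules` maps a location to "single-party" or "all-party".
    A location missing from the mapping raises an error so that an unknown
    jurisdiction is never silently treated as the lenient case.
    """
    missing = [loc for loc in participant_locations if loc not in consent_rules]
    if missing:
        raise KeyError(f"no consent rule on file for: {missing}")
    if any(consent_rules[loc] == "all-party" for loc in participant_locations):
        return "all-party"
    return "single-party"

if __name__ == "__main__":
    # Placeholder jurisdictions and values, for illustration only.
    rules = {"JURISDICTION_A": "single-party", "JURISDICTION_B": "all-party"}
    print(required_consent(["JURISDICTION_A", "JURISDICTION_B"], rules))
```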

Distinguishing Technical Issues from Fraud

Poor video quality, audio synchronization problems, and inconsistent lighting frequently result from legitimate technical limitations rather than fraud attempts. Consumer-grade webcams, bandwidth constraints, older computers, and suboptimal home office environments produce artifacts that superficially resemble deepfake indicators.

| Observation | Innocent Explanation | Fraud Indicator |
| --- | --- | --- |
| Audio delay | Network bandwidth constraints, processing limitations | Consistent delay specifically during speech with no other connection issues |
| Rigid posture | Interview anxiety, ergonomic constraints | Persistent rigidity combined with refusal of spontaneous movement requests |
| Lighting inconsistency | Window placement, laptop webcam with desk lamp | Facial lighting direction completely opposite to visible room lighting |
| Limited expressiveness | Cultural communication norms, stress response | Mechanical or absent expressions combined with other technical anomalies |

Candidates experiencing interview stress may demonstrate behaviors that overlap with fraud indicators, such as avoiding direct eye contact, maintaining rigid posture, or pausing extensively before answering questions. Nervous behaviors typically moderate as interviews progress and candidates become comfortable, while fraud-driven behaviors remain consistent throughout interactions.

Calibrating Threat Response

Media coverage of deepfake technology capabilities creates an inflated perception of fraud prevalence in hiring contexts. Most organizations conducting remote interviews never encounter deepfake fraud attempts. Realistic risk assessment considers the organizational factors that make a role attractive to fraudsters: fully remote positions offering above-market compensation present higher risk than on-site roles with immediate credential verification.

Organizations should calibrate detection intensity to their actual risk profile rather than applying uniform maximum-security measures across all hiring. Verification requests should be framed as standard security measures applicable to all finalists rather than targeted demands suggesting individual suspicion. Detection protocols must include accommodation processes for candidates who cannot comply with standard verification requests for legitimate reasons, so that the protocols do not create accessibility barriers, legal risk, or inequitable hiring outcomes.

Conclusion

Deepfake interview fraud represents a manageable risk when organizations implement observation-based detection protocols, procedural countermeasures, and appropriate technology assistance calibrated to their threat landscape. Effective approaches combine interviewer training on visual and behavioral indicators with spontaneous verification elements and multi-session structures, supported where justified by liveness detection or forensic analysis tools while respecting privacy boundaries and maintaining positive candidate experience.

Frequently Asked Questions

What is a deepfake interview and how common are they in hiring?

A deepfake interview occurs when a candidate uses artificial intelligence-powered face-swapping, voice synthesis, or pre-recorded video to impersonate another individual during video screening. Current prevalence remains low, with documented cases concentrated in remote technology and financial services positions. The risk appears highest for fully remote roles offering competitive compensation where credential verification occurs post-hire.

Can I detect deepfake interviews without specialized software?

Yes, trained interviewers can identify many deepfake attempts through systematic observation of audio-visual synchronization, lighting consistency, facial movement naturalness, and candidate responses to spontaneous requests. Observable indicators include lip-sync delays, edge artifacts where facial features meet hair or neck, and unnatural eye movements. Procedural approaches incorporating unpredictable spontaneous elements significantly increase detection probability without requiring technology investment.

What should I do if I suspect a candidate is using deepfake technology?

Escalate concerns to designated HR compliance officers, legal counsel, or security personnel rather than making independent fraud determinations. Request additional identity verification through supplementary interview sessions or modified protocols with increased spontaneous elements without explicitly stating fraud suspicions unless evidence reaches high confidence thresholds. Preserve complete interview recordings and technical metadata, and consult legal counsel before communicating fraud determinations to candidates.

Are there legal restrictions on using deepfake detection technology in hiring?

Yes, detection systems collecting biometric information typically trigger state-specific notification and consent requirements, particularly in jurisdictions with explicit biometric privacy laws. Organizations must provide clear disclosures about what data collection occurs, how information will be used, retention timeframes, and security measures before implementing biometric verification. Detection systems demonstrating disparate accuracy across demographic groups create substantial discrimination risk requiring bias testing and ongoing monitoring.

How does deepfake detection fit with traditional background checks?

Deepfake detection and background checks serve complementary rather than overlapping purposes within identity verification workflows. Background checks verify credentials and employment history belonging to the claimed identity, while deepfake detection confirms the interview participant actually is that person. Optimal sequencing conducts identity verification including deepfake detection before initiating background checks to avoid expending screening resources on individuals whose identity remains unconfirmed.

What are the most reliable indicators for identifying deepfake interviews?

Candidate inability or refusal to comply with reasonable spontaneous physical requests may indicate potential fraud, though organizations must consider legitimate reasons for non-compliance, including accessibility needs, technical limitations, or privacy concerns. Technical indicators include audio-visual synchronization problems during rapid speech, lighting direction on facial features inconsistent with visible environmental lighting, and unnatural eye movement patterns. Multi-session interview approaches enable cross-session consistency verification of environmental details.

How can small organizations implement deepfake detection with limited resources?

Small organizations can achieve substantial fraud mitigation through procedural approaches requiring minimal technology investment, including multi-session interview structures and incorporation of spontaneous verification requests throughout interviews. Training interviewers to recognize basic audio-visual indicators and establishing clear escalation procedures for suspected fraud provides foundational capability. Risk-based approaches focusing intensive verification on finalist candidates for sensitive positions enable appropriate resource allocation.

What privacy considerations apply when recording interviews for fraud analysis?

Organizations recording interviews for fraud detection must provide clear notification to candidates before recording begins and verify whether applicable state laws require single-party or all-party consent. Recordings constitute sensitive personal information requiring security measures preventing unauthorized access, with policies addressing who may view recordings and for what purposes. When using third-party analysis vendors, contractual agreements must prohibit use of candidate data beyond immediate verification services.

Additional Resources

- Deepfakes and Synthetic Media: What Communicators Need to Know
https://www.ftc.gov/business-guidance/blog/2021/03/deep-fake-or-fake-deep

- Using Artificial Intelligence and Algorithms in Hiring
https://www.eeoc.gov/laws/guidance/using-artificial-intelligence-and-algorithms

- Fair Credit Reporting Act Overview
https://www.ftc.gov/legal-library/browse/statutes/fair-credit-reporting-act

- Biometric Information Privacy Act Requirements
https://www.ilga.gov/legislation/ilcs/ilcs3.asp?ActID=3004

- NIST Digital Identity Guidelines: Biometric Requirements
https://pages.nist.gov/800-63-3/sp800-63b.html

Charm Paz, CHRP

Recruiter & Editor

Charm Paz is an HR and compliance professional at GCheck, working at the intersection of background screening, fair hiring, and regulatory compliance. She holds both FCRA Core and FCRA Advanced certifications through the Professional Background Screening Association (PBSA) and supports organizations in navigating complex employment regulations with clarity and confidence.

With a background in Industrial and Organizational Psychology and hands-on experience translating policy into practice, Charm focuses on building ethical, compliant, and human-centered hiring systems that strengthen decision-making and support long-term organizational health.